Performance dashboard

Information on the project performance dashboard and the included metrics.

The performance dashboard helps manage labeling operations in Labelbox projects.

It reports the throughput, efficiency, and quality of the labeling process.

These analytics are reported for each individual on the project team and collectively for the project overall.

You can filter the views to focus on specific information and dimensions.

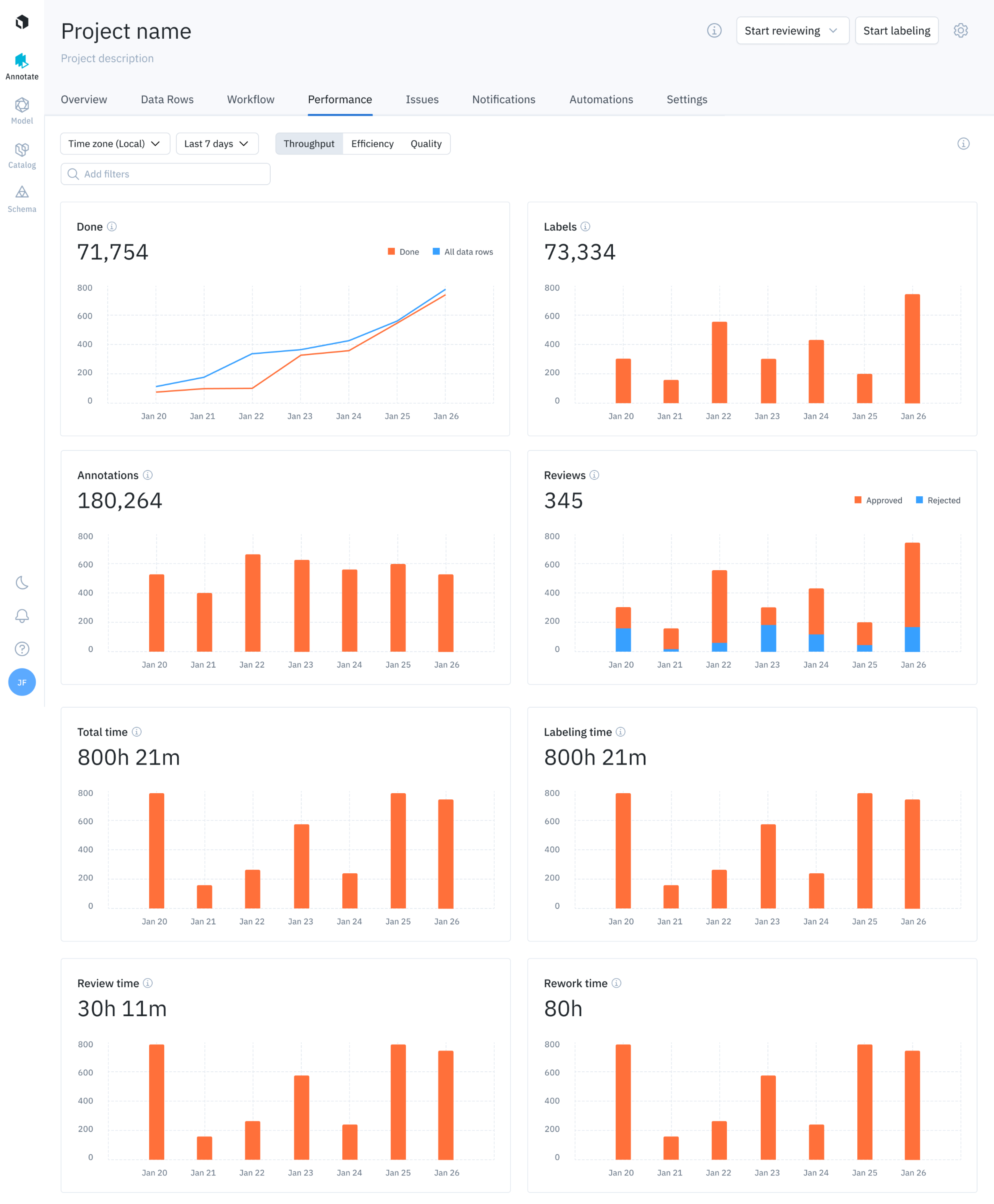

The performance dashboard displays throughput graphs

The performance of your data labeling operation can be broken down into three components: throughput, efficiency, and quality. Each component has unique views to help you understand the overall performance of your labeling operation.

Use the mouse to select and display details for any graph value.

The performance dashboard does not support custom editors.

Filters

Filters at the top of the performance dashboard let you analyze relevant subsets of data. When active, filters apply to all metric views, including Throughput, Efficiency, and Quality.

Currently, the following filters are provided:

| Filter | Description |

|---|---|

| Batch | Filters the graphs and metrics by data rows belonging to a batch. |

| Labelers | Filters the graphs and metrics by data rows that have been labeled by a specific labeler. |

| Deleted | Filters the graphs and metrics by data rows based on their deletion status. Exclude: excludes data rows with labels that have been deleted. Include: includes all labels created in the project, including deleted labels. |
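
The way the three filters combine can be pictured with a small sketch. This is not Labelbox code: the `LabelRecord` shape, the `apply_filters` helper, and the `"include"`/`"exclude"` strings are all hypothetical names chosen for illustration, and the sketch assumes that active filters are combined with a logical AND.

```python
from dataclasses import dataclass


@dataclass
class LabelRecord:
    # Hypothetical record shape, for illustration only.
    data_row_id: str
    batch: str
    labeler: str
    deleted: bool


def apply_filters(records, batch=None, labeler=None, deleted="include"):
    """Return the records that pass every active filter (assumed AND logic)."""
    result = []
    for record in records:
        if batch is not None and record.batch != batch:
            continue
        if labeler is not None and record.labeler != labeler:
            continue
        # "exclude" drops deleted labels; "include" keeps everything.
        if deleted == "exclude" and record.deleted:
            continue
        result.append(record)
    return result
```

A filter left as `None` is treated as inactive, so calling `apply_filters(records)` with no arguments returns every record.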

Throughput view

The Throughput view provides insight into the amount of labeling work being produced and helps you answer questions like:

- How many assets were labeled in the last 30 days?

- What is the review time and rework time for labeled assets?

- What is the average amount of labeling work being produced?

These metrics are available for the project as a whole and for individual members.

The throughput view displays the following metrics:

| Chart | Description |

|---|---|

| Done | Displays the number of data rows with the Done status (see Workflows for status definitions) compared to the total number of data rows in the project, over a specified time period (cumulative). |

| Labels | Displays the number of labeled data rows over a specified time period (includes deleted labels by default). |

| Annotations | Displays the number of annotations created (includes deleted annotations by default). |

| Reviews | Displays the count of approve and reject actions committed in a project (for information on approve & reject actions in the review queue, see workflows). |

| Total time | Displays the total time spent labeling, reviewing, and reworking data rows. |

| Labeling time | Displays the total labeling time spent on data rows. See the flowchart below. |

| Review time | Displays the total review time spent on labeled data rows. See the flowchart below. |

| Rework time | Displays the total rework time spent on labeled data rows. See the flowchart below. Note: this includes time spent in the Rework queue and editing a label in the data row browser view editor. |
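
As an illustration of what "cumulative" means for the Done chart, the sketch below turns a list of completion dates into a running total. It assumes you already have the date on which each data row reached the Done status; the `cumulative_done` function and its inputs are hypothetical and do not correspond to a Labelbox API.

```python
from collections import Counter
from datetime import date
from itertools import accumulate


def cumulative_done(done_dates, total_rows):
    """Return (day, cumulative_done, total_rows) tuples sorted by day."""
    per_day = Counter(done_dates)
    days = sorted(per_day)
    # Running total of data rows that reached Done on or before each day.
    running = accumulate(per_day[day] for day in days)
    return [(day, count, total_rows) for day, count in zip(days, running)]
```

Each tuple pairs the running Done count with the project total, which is the comparison the chart draws.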

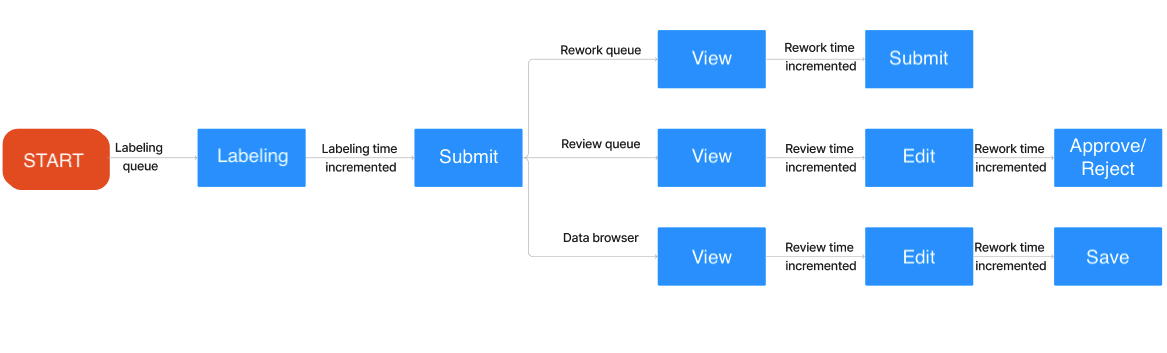

Tracking states for timing

A data row goes through many different states, which are tracked in the timer log.

This chart illustrates a data row's journey through Annotate. This data flow informs the associated timer log for measuring labeling time, rework time, and review time.

Data rows go through a number of different states; the time spent in each state is tracked as labeling time, review time, or rework time.

Labeling time

Labeling time increments when:

- The user skips or submits the asset in the labeling queue.

Review time

Review time increments when:

- The user views the asset in the data row browser view.

- The user views the asset in the review queue.

Rework time

Rework time increments when:

- The user submits the asset in the rework queue.

- The user navigates to the review queue, selects Edit, and approves or rejects the asset.

- The user edits and saves the asset in the data row browser.
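
The rules above can be summarized as a lookup from where an action happens to the timer it feeds. The following is a minimal sketch under stated assumptions: the location and action strings, the `classify` function, and the event tuples are hypothetical and are not part of the Labelbox timer log format.

```python
from collections import defaultdict


def classify(location, action):
    """Map a (location, action) pair to a timer bucket, or None if untracked."""
    if location == "labeling_queue" and action in {"skip", "submit"}:
        return "labeling"
    if location == "rework_queue" and action == "submit":
        return "rework"
    if location in {"review_queue", "data_row_browser"}:
        # Editing counts as rework; viewing without edits counts as review.
        if action == "edit":
            return "rework"
        if action == "view":
            return "review"
    return None


def total_by_bucket(events):
    """Sum seconds per bucket for (location, action, seconds) tuples."""
    totals = defaultdict(float)
    for location, action, seconds in events:
        bucket = classify(location, action)
        if bucket is not None:
            totals[bucket] += seconds
    return dict(totals)
```

Events that match none of the rules are ignored rather than guessed at, which keeps the three totals limited to the cases the documentation describes.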

Inactivity

Only active screen time is recorded. Inactivity pauses the timer.

When your system is idle for more than five (5) minutes, the timer pauses. This affects labeling time, review time, and rework time.

The timer resumes when system activity resumes.
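
To see how an idle cutoff changes recorded time, consider the sketch below. It assumes activity arrives as timestamps in seconds and that the timer keeps running for up to five minutes after the last activity before pausing; the documentation does not specify whether that initial idle window is counted, so treat the capping behavior and the `active_seconds` helper as assumptions.

```python
IDLE_LIMIT_SECONDS = 5 * 60  # five-minute idle threshold from the text above


def active_seconds(timestamps, idle_limit=IDLE_LIMIT_SECONDS):
    """Sum the time between activity events, capping each gap at idle_limit."""
    ordered = sorted(timestamps)
    total = 0.0
    for previous, current in zip(ordered, ordered[1:]):
        gap = current - previous
        # Assumption: a gap longer than the limit only counts up to the limit.
        total += min(gap, idle_limit)
    return total
```

With events at 0, 60, 120, and 1000 seconds, the first two gaps count in full (60 seconds each) and the last gap counts as 300 seconds, for 420 seconds of recorded time instead of 1000.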

Efficiency view

The efficiency view helps you visualize the time spent per unit of work, such as per labeled asset or per review. These metrics help answer questions such as:

- What is the average amount of time spent labeling an asset?

- How can I reduce time spent per labeled asset?

These metrics are available for individual project members and at the project level (for all project members).

Efficiency view includes these charts:

| Chart | Description |

|---|---|

| Avg time per label | Displays the average labeling time spent per label. Avg time per label = Total labeling time / number of labels submitted |

| Avg review time | Displays the average review time per data row. Avg review time = Total review time / number of data rows reviewed |

| Avg rework time | Displays the average rework time per data row. Avg rework time = Total rework time / number of data rows reworked |
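
All three efficiency charts share the same arithmetic: a total time divided by a count. A minimal sketch follows; the `average_time` helper is hypothetical, and returning `None` when the count is zero is an assumption about how an empty period could be handled.

```python
def average_time(total_seconds, count):
    """Return total_seconds / count, or None when there is nothing to average."""
    if count == 0:
        return None
    return total_seconds / count
```

For example, 600 seconds of labeling time across 4 submitted labels gives an average of 150 seconds per label.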

Quality view

The quality view helps you understand the accuracy and consistency of the labeling work being produced.

These metrics answer questions like:

- What is the average quality of a labeled asset?

- How can I ensure label quality is more consistent across the team?

These metrics are available for individual project members and at the project level, which summarizes performance for all project members.

Quality view includes the following charts:

| Metric | Description |

|---|---|

| Benchmark | Shows the average benchmark scores on labeled data rows within a specified time frame. |

| Benchmark distribution | Shows a histogram of benchmark scores (grouped by 10) for labeled assets within a specified time frame. |

| Consensus | Shows the average consensus score of labeled assets over a selected period. This is the average agreement score of the consensus labels with one another (for a given data row). |

| Consensus distribution | Shows a histogram of consensus scores (grouped by 10) for labeled assets plotted over the selected period. |

Graphs display the components appropriate for your project. Benchmark and consensus charts appear only when those features are enabled for your project.
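
The distribution charts group scores into buckets of 10. The sketch below shows one way such a grouping could work. It assumes scores are expressed on a 0 to 100 scale and that a score of exactly 100 falls into the top bucket; both points are assumptions, and `score_histogram` is a hypothetical helper.

```python
def score_histogram(scores, bucket_width=10):
    """Count scores per bucket: 0-9, 10-19, ..., with 100 in the last bucket."""
    bucket_count = 100 // bucket_width
    counts = [0] * bucket_count
    for score in scores:
        # Clamp so that a score of exactly 100 lands in the final bucket.
        index = min(int(score // bucket_width), bucket_count - 1)
        counts[index] += 1
    return counts
```

Scores of 5, 15, 95, and 100 would produce one entry in each of the first two buckets and two entries in the last one.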

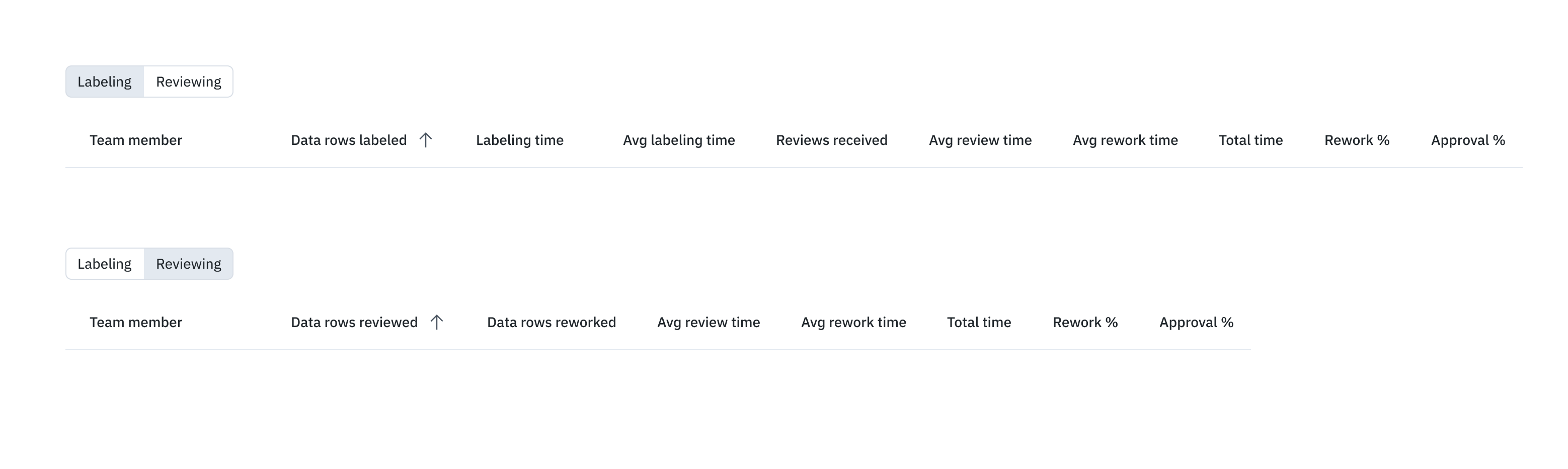

Individual member performance

You can also view individual metrics for each team member who has worked on the project. The performance metrics are separated by intent (labeling and reviewing) and are shown as distinct views in the table.

Team members are listed in separate rows. A member appears only if they actively performed tasks during the selected period, that is, if they labeled data rows or reviewed labels.

Labeling metrics include:

| Metric (Labeling) | Description |

|---|---|

| Labels created | Number of labels created by team member during the selected period. |

| Labels skipped | Number of labels skipped by team member during the selected period. |

| Labeling time (submitted) | Total labeling time team member spent creating and submitting labels during the selected period. |

| Labeling time (skipped) | Total labeling time team member spent working on labels that were ultimately skipped. (The Skip button was clicked.) |

| Avg time per label | Average labeling time of submitted and skipped labels. Calculated by dividing total labeling time by the number of labels submitted or skipped. Displays N/A when no labels have been submitted or skipped. |

| Reviews received | The number of Workflow review queue actions (Approve and Reject) received during the selected period on data rows labeled by the team member. |

| Avg review time (all) | Average review time on labels created by the team member. Calculated by dividing the total review time for all team members by the number of labels that have been reviewed. |

| Avg rework time (all) | Average rework time on labels created by the team member. Calculated by dividing the total rework time spent by all team members by the number of labels reworked. |

| Rework time (all) | Total rework time spent on labels created by the team member in the selected period. |

| Review time (all) | Total review time spent on labels created by the team member during the selected period. |

| Rework % | Percentage of labels created by the team member that were reworked during the selected period. Calculated by dividing the number of labels created by the team member that had any rework done by the number of labels created by the team member. |

| Approval % | Percentage of data rows labeled by the team member that were approved during the selected period. Calculated by dividing the number of data rows with one or more approved labels by the number of review actions (Approve or Reject). Labels pending review are not included. |

| Benchmark score | Average agreement score with benchmark labels for labels created by the team member during the selected period. |

| Consensus score | Average agreement score with other consensus labels for labels created by the team member during the selected period. |
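
The two percentage metrics for labelers use different denominators, which is easy to miss in the table. The sketch below works through both under simplifying assumptions: one label per data row, and a hypothetical `LabelStats` record that is not a Labelbox data structure.

```python
from dataclasses import dataclass


@dataclass
class LabelStats:
    # Hypothetical per-label summary, for illustration only.
    rework_seconds: float
    approvals: int
    rejections: int


def rework_pct(labels):
    """Labels with any rework, as a percentage of labels created."""
    if not labels:
        return None
    reworked = sum(1 for label in labels if label.rework_seconds > 0)
    return 100.0 * reworked / len(labels)


def approval_pct(labels):
    """Approved rows as a percentage of review actions (Approve or Reject)."""
    actions = sum(label.approvals + label.rejections for label in labels)
    if actions == 0:
        return None  # labels pending review are not included
    approved_rows = sum(1 for label in labels if label.approvals > 0)
    return 100.0 * approved_rows / actions
```

A label that has not been reviewed still counts toward the Rework % denominator, but it adds nothing to the Approval % denominator.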

These metrics appear on the Reviewing tab:

| Metric (Reviewing) | Description |

|---|---|

| Data rows reviewed | Data rows that have had review time spent by the user (per filter selection). |

| Data rows reworked | Data rows that have had rework time spent by the user. |

| Avg review time | Average review time spent by the user in the selected period. Numerator: total review time spent by the user. Denominator: number of labels reviewed by the user. |

| Avg rework time | Average rework time spent by the user in the selected period. Numerator: total rework time spent by the user. Denominator: number of labels reworked (approved or rejected) by the user. |

| Total time | Sum of review time and rework time spent by the user during the selected period. |

| Rework % | Percentage (%) of data rows reviewed by the user that have been reworked. Numerator: number of labels that have had both rework time and review time spent by the user. Denominator: number of data rows that have had review time spent by the user. |

| Approval % | Data rows with an approve action by the user as a percentage (%) of data rows with any review action by the user. Numerator: number of data rows with an approve action by the user (in workflow). Denominator: number of data rows with either an approve or reject action by the user (in workflow). |
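
The reviewer-side percentages can be expressed with set operations on data row IDs. This is a sketch, not Labelbox code: the `reviewer_percentages` function and its four input sets are hypothetical, and each set is assumed to hold the IDs of data rows that one reviewer touched in the selected period.

```python
def reviewer_percentages(reviewed, reworked, approved, rejected):
    """Return (rework_pct, approval_pct) for one reviewer.

    Each argument is a set of data row IDs; None is returned for a
    percentage whose denominator is empty.
    """
    # Rework %: rows with both review and rework time / rows with review time.
    rework_pct = 100.0 * len(reviewed & reworked) / len(reviewed) if reviewed else None
    # Approval %: rows approved / rows with either an approve or reject action.
    acted = approved | rejected
    approval_pct = 100.0 * len(approved) / len(acted) if acted else None
    return rework_pct, approval_pct
```

Note that a row the reviewer reworked but never reviewed does not count toward Rework %, because the numerator requires both kinds of time.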

Import timer impact

Importing labels affects labeling time, depending on what you import:

- Ground truth labels: No label time is recorded on the **Data rows** tab or the performance dashboard for that label, and label time is displayed as zero (0). When team members modify the label, that time is recorded as review time.

- Prelabels created via model-assisted labeling (MAL): No label time is recorded on the **Data rows** tab or the performance dashboard for that label, and label time is displayed as zero (0). When team members open the data row in the editor, the time spent before selecting Skip or Submit is recorded as labeling time.
